About me

I am a PhD student in the lab of Friedemann Zenke at the Friedrich Miescher Institute, where I work in computational neuroscience and focus on understanding spiking neural networks.

I completed my master’s degree in biomedical engineering at ETH Zürich. During my master’s, I worked on two research projects: a biologically plausible implementation of an autoencoder in spiking neural networks, with Matteo Cartiglia in Giacomo Indiveri’s lab at INI, and Alzheimer’s prediction from MRI data using probabilistic graphical models, with David Brüggemann in Ender Konukoglu’s biomedical image computing group. I wrote my master’s thesis on minimum norm optimization in Benjamin Grewe’s lab with Alexander Meulemans and Matilde Tristany Farinha.

Before that, I received a bachelor’s degree in electrical engineering and information technology from ETH Zürich. In my final year, I was a member of the Cardex focus project team, which developed a transcatheter mitral valve repair simulator as a novel training technology for cardiovascular interventions (see Zimmermann et al., 2021, Supplementary Material 3 and 4, for a video of our Cardex simulator in action).

Education

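PhD student in computational neuroscience, Friedrich Miescher Institute | University of Basel

MSc in biomedical engineering, ETH Zürich

BSc in electrical engineering and information technology, ETH Zürich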

News

2026

- 2026-03-14: Poster at COSYNE 2026 - Are excitatory-inhibitory assemblies an ideal substrate for efficient multi-purpose representations?

- 2026-03-06: Received an Outstanding Reviewer Award 2025 from Neuromorphic Computing and Engineering for my reviews during the year

2025

- 2025-11-05: Flash talk and poster at SNUFA 2025 - Emerging assembly structures in trained spiking neural networks

- 2025-09-30: Poster at Bernstein Conference 2025 - Emerging assembly structures in trained spiking neural networks

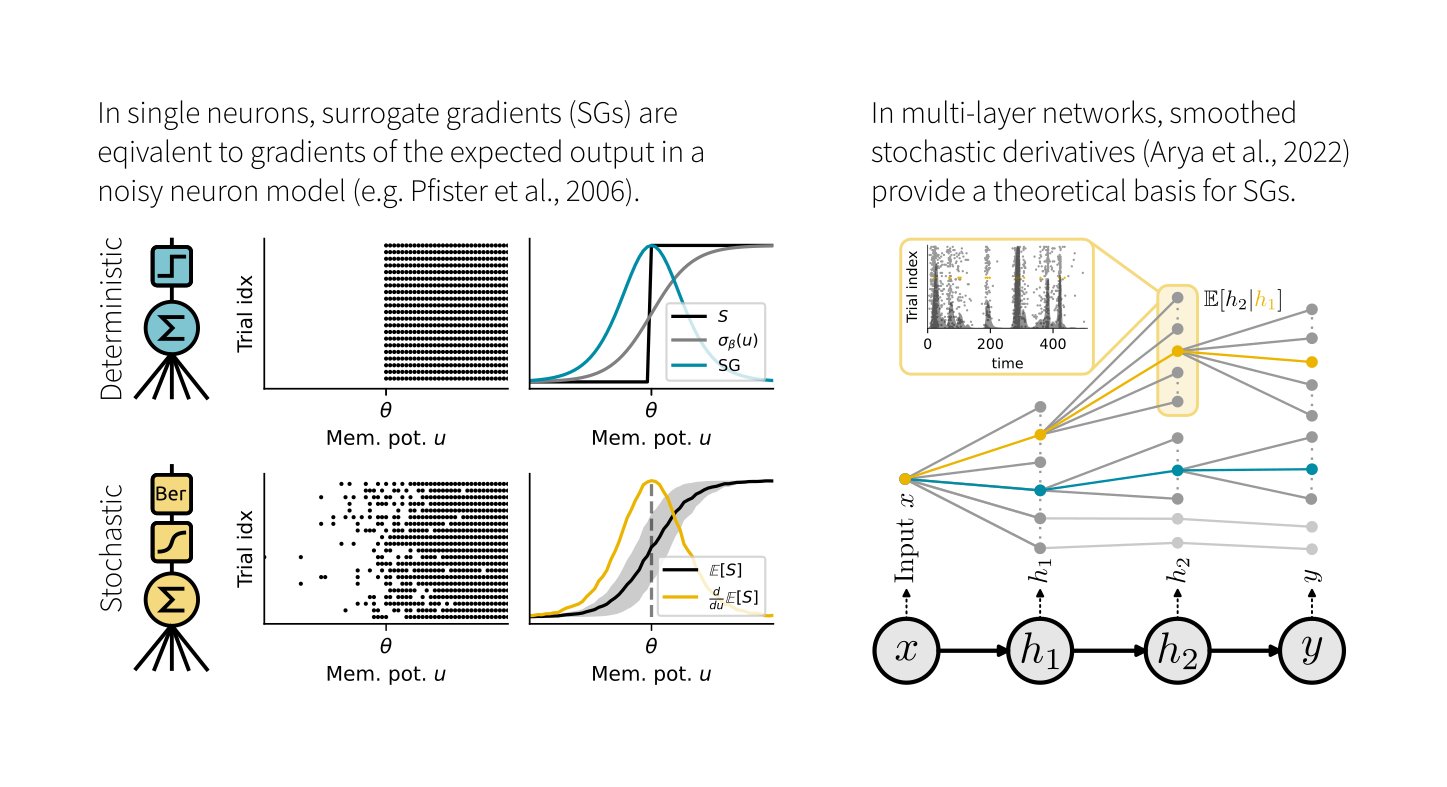

- 2025-03-20: Our paper Elucidating the Theoretical Underpinnings of Surrogate Gradient Learning in Spiking Neural Networks was published in Neural Computation

2024

- 2024-11-05: Session chair at SNUFA 2024

- 2024-10-26: Tengjun Liu, Julian Rossbroich and I won first prize at the IEEE BioCAS 2024 Grand Challenge on Neural Decoding for our work on efficient cortical spike train decoding with recurrent spiking neural networks, which achieved the best trade-off between decoding accuracy and computational cost.

- 2024-10-01: Poster at Bernstein Conference 2024 - Efficient cortical spike train decoding for brain-machine interface implants with recurrent spiking neural networks (joint work with Tengjun Liu and Julian Rossbroich)

- 2024-09-20: Tengjun Liu, Julian Rossbroich and I won the Ruth Chiquet Prize for our work on efficient cortical spike train decoding with spiking neural networks. The Ruth Chiquet Prize is awarded to honor research ingenuity.

- 2024-09-12: Invited talk at the Control Theory Group of Prof. Mustafa Khammash, ETH (D-BSSE) - Training spiking neural networks: Relating surrogate gradients, probabilistic models, and stochastic automatic differentiation

- 2024-09-03: Our new preprint Decoding finger velocity from cortical spike trains with recurrent spiking neural networks, our submission to the IEEE BioCAS 2024 Grand Challenge on Neural Decoding, is available on arXiv (joint work with Tengjun Liu and Julian Rossbroich)

- 2024-08-30: Talk at Giessbach@Rasses 2024 meeting - Building plausible spiking neural network models in-silico

- 2024-04-24: Our new preprint Elucidating the Theoretical Underpinnings of Surrogate Gradient Learning in Spiking Neural Networks is available on arXiv

2023

- 2023-11-07: Flash talk and poster at SNUFA 2023 - Comparing surrogate gradients and likelihood-based training for spiking neural networks

- 2023-09-27: Poster at Bernstein Conference 2023 - A comparative analysis of spiking network training with surrogate gradients and likelihood-based approaches

- 2023-06-09: Poster at Early-Career Researchers Symposium 2023 in Lugano

- 2023-02-01: Talk at Swiss Computational Neuroscience Retreat - Insights into spiking network training: Influences of initialization and neuronal stochasticity

2022

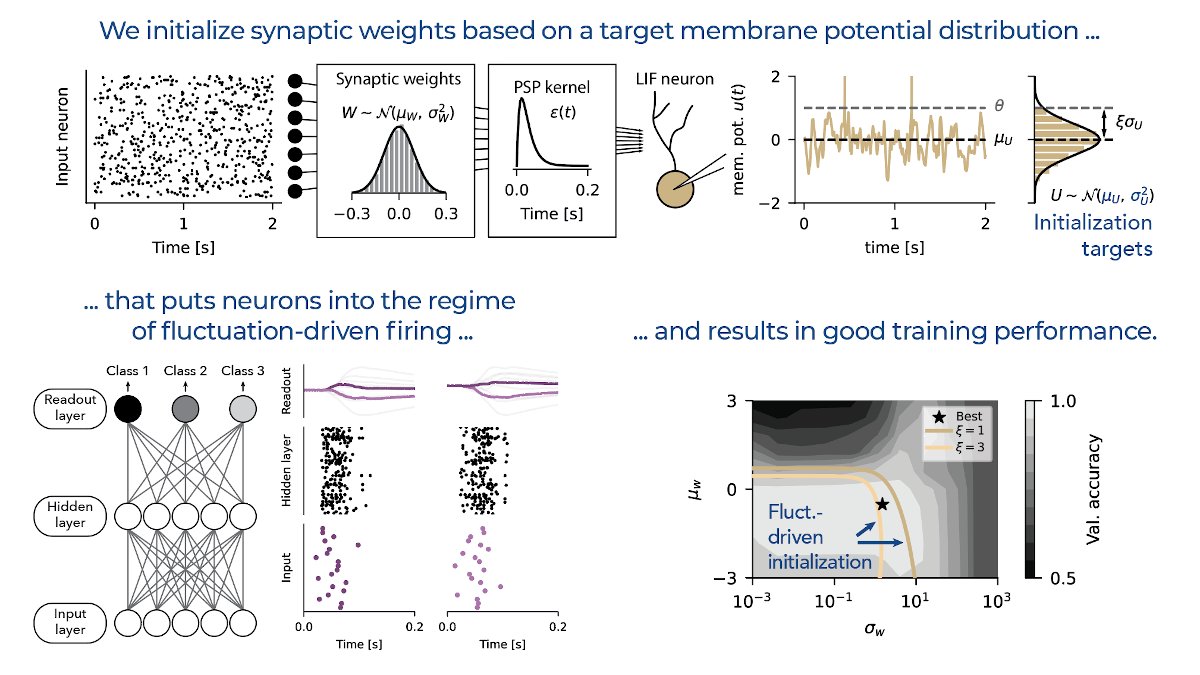

- 2022-12-31: Our paper Fluctuation-driven initialization for spiking neural network training was selected as one of the Highlights of 2022 in the IOP Neuromorphic Computing and Engineering journal

- 2022-12-08: Our paper Fluctuation-driven initialization for spiking neural network training was published in Neuromorphic Computing and Engineering (joint work with Julian Rossbroich)

- 2022-09-14: Poster at Bernstein Conference 2022, joint work with Julian Rossbroich - Fluctuation-driven initialization for spiking neural network training

2021

- 2021-11-03: Talk at SNUFA 2021 - Optimal initialization strategies for deep spiking neural networks